A small team of researchers at the University of California, Berkeley, has developed a robot dog designed to help people in much the same way that real guide dogs do. The team has written a paper describing their robot guide dog and uploaded it to the arXiv preprint server. They have also posted two videos on YouTube demonstrating the robot's capabilities.

There is no doubt that guide dogs are very useful to people who are blind or have low vision. But they have their limitations. The first is that training a dog takes a lot of time and money, which leaves many people on long waiting lists or unable to afford one at all. Another is that a guide dog cannot read a map and then use it to navigate to a desired destination. In this new effort, the researchers have developed a robot dog that can carry out the duties of a live guide dog and also provide additional services, such as following a downloaded map to a destination.

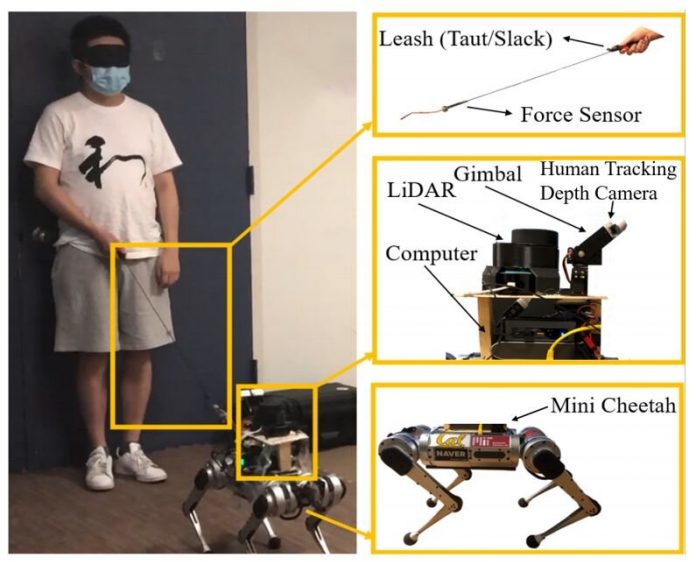

The researchers started with a Mini Cheetah, a four-legged robot developed at MIT. It comes equipped with lasers and cameras that allow it to map nearby terrain, along with an onboard computer that uses what it senses to walk around without colliding with objects and to follow a predetermined course. The researchers added a leash and a human-tracking depth camera, which tells the robot dog where the person it is leading is located. The robot and the person then work together to move from one location to the next. First, a map describing the path the dog is to take, including details about the terrain along the way, is downloaded to the robot. Then the person grabs hold of the leash and the pair begin walking.
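The article does not say how the depth camera turns its image into a location for the person, so the following is only a minimal sketch of one common approach, assuming a standard pinhole camera model with OpenCV-style axes (x to the right, y down, z forward). A pixel on the detected person, together with the depth reading at that pixel, is back-projected into a 3-D point, from which a distance and bearing on the ground follow. The names `Intrinsics`, `pixel_to_point` and `range_and_bearing`, and all of the numbers in the example, are hypothetical and are not taken from the researchers' system.

```python
import math
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Hypothetical pinhole-camera intrinsics, in pixels."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point, x coordinate
    cy: float  # principal point, y coordinate


def pixel_to_point(u, v, depth_m, k):
    """Back-project pixel (u, v) with a depth reading in meters into a
    camera-frame point (x right, y down, z forward), in meters."""
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


def range_and_bearing(point):
    """Ground-plane distance (m) and bearing (rad, positive to the right)
    of a camera-frame point, ignoring its height (the y component)."""
    x, _, z = point
    return math.hypot(x, z), math.atan2(x, z)


# Example: a person detected at pixel (380, 240), 2.0 m from the camera.
k = Intrinsics(fx=600.0, fy=600.0, cx=320.0, cy=240.0)
person = pixel_to_point(380, 240, 2.0, k)
dist, bearing = range_and_bearing(person)
print(person)
print(round(dist, 3), round(math.degrees(bearing), 1))
```

A real tracker would also have to pick which pixels belong to the person and transform the point from the camera frame into the robot's own frame; the sketch leaves both steps out.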

Testing has shown that the robot dog is able to lead a person (a sighted person wearing a blindfold) from a starting point to an ending point, whether the leash is taut or slack. More work will need to be done, however, before the robot dog is ready to lead a person who is blind in a real-world setting. For example, the robot will need a smoother and quieter gait, and it will need to give the person it is leading more feedback as the walk unfolds.
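One simple way to picture the taut and slack leash conditions is as two modes that switch on the distance between the robot and the person's hand. The sketch below is a simplified illustration under that assumption, not the researchers' model: it treats the leash as an inextensible string in two dimensions, and the function names, positions, 1.0 m leash length and 5 cm tolerance are all hypothetical.

```python
import math


def leash_mode(robot_xy, human_xy, leash_length_m, tol_m=0.05):
    """Classify the leash as 'taut' or 'slack' from the straight-line
    distance between the leash attachment point and the person's hand.

    Treats the leash as an inextensible string: it can only pull once the
    separation reaches (roughly) its full length.
    """
    separation = math.dist(robot_xy, human_xy)
    return "taut" if separation >= leash_length_m - tol_m else "slack"


def pull_direction(robot_xy, human_xy):
    """Unit vector pointing from the person toward the robot, i.e. the
    direction a taut leash would tug the person's hand."""
    dx = robot_xy[0] - human_xy[0]
    dy = robot_xy[1] - human_xy[1]
    norm = math.hypot(dx, dy)
    if norm == 0.0:
        return (0.0, 0.0)  # coincident points: no well-defined direction
    return (dx / norm, dy / norm)


# Example with a hypothetical 1.0 m leash attached to a robot at (1.0, 0.0).
robot = (1.0, 0.0)
for hand in [(0.0, 0.0), (0.5, 0.0)]:
    mode = leash_mode(robot, hand, leash_length_m=1.0)
    pull = pull_direction(robot, hand) if mode == "taut" else (0.0, 0.0)
    print(hand, mode, pull)
```

In this simplified picture, a slack leash transmits no pull at all, which is one reason a separate way of tracking the person, such as the depth camera, is useful.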