The first question you may be asking is: What exactly is 5G? The second question may be: How is it architected differently to deliver speed, low latency, capacity, and numerous other benefits?

In this article, we will tackle the 5G architecture question. We will look at some of the capabilities made possible by 5G network architecture and how connected applications can benefit from it. You can find more resources in the links throughout this article and in the related resources in the footer. For a good basic 5G introduction, see the article, What Is 5G, Part 1. Our 5G overview continues in Part 2, Who Will Adopt 5G Technology, and When?

One thing is certain: Our connected world is changing. 5G, with its next-generation network architecture, has the potential to support thousands of new applications in both the consumer and industrial segments. The possibilities for 5G seem almost limitless when speed and throughput are exponentially higher than current networks.

These advanced capabilities will enable applications across vertical markets such as manufacturing, healthcare, and transportation, where 5G will play a major role in everything from advanced manufacturing automation to fully autonomous vehicles. In order to develop profitable business use cases and applications for 5G, it helps to have at least a general understanding of the 5G network architecture that lies at the heart of all these new applications.

5G has received an enormous amount of attention, and more than a little hype. While the potential is enormous, it’s important to know that the industry is still in its early stages of adoption. The process of deploying the 5G network started many years ago and involved building out the new infrastructure, most of which is funded by the major wireless carriers.

Full 5G deployment will take time, rolling out in major cities long before it can reach less populated areas. Digi supports our customers in preparing for 5G, with communications on migration planning and next-generation products. While Digi is not directly involved in developing the 5G core or the 5G New Radio (NR) radio access network (RAN), Digi devices will be an integral part of the 5G vision and will be used in a myriad of 5G applications.

5G Network Architecture

So – what exactly is 5G and how does 5G network technology architecture differ from previous “G’s”?

The standards behind 5G network architecture were developed by the 3rd Generation Partnership Project (3GPP), the organization that develops international standards for all mobile communications. The International Telecommunication Union (ITU) and its partners define the requirements and timeline for mobile communication systems, defining a new generation approximately every decade. The 3GPP develops specifications for those requirements in a series of releases.

The “G” in 5G stands for “generation.” 5G technology architecture presents significant advances beyond 4G LTE (long-term evolution) technology, which comes on the heels of 3G and 2G. As we describe in our related resource, The Journey to 5G, there is always a time period during which multiple network generations exist at once. Like its predecessors, 5G must co-exist with previous networks for two important reasons:

- Developing and deploying new network technologies takes an enormous amount of time, investment and collaboration of major entities and carriers.

- Early adopters will always want to get their hands on new technologies as quickly as possible, whereas those who have made major investments in large deployments with existing network technologies, such as 2G, 3G and 4G LTE, want to make use of those investments for as long as possible, and certainly until the new network is fully viable. (Note that 2G and 3G networks are being sunset to make room for 5G deployment. See our blog post 2G, 3G, 4G Network Shutdown Updates.)

The network architecture of 5G mobile technology improves vastly upon past architectures. Large, cell-dense networks enable massive leaps in performance. In addition, the architecture of 5G networks offers better security than today’s 4G LTE networks.

In summary, 5G technology offers three principal advantages:

- Faster data transmission, up to multi-gigabit-per-second speeds.

- Greater capacity, supporting a massive number of IoT devices per square kilometer.

- Lower latency, down to single-digit milliseconds, which is critically important in applications such as connected vehicles in ITS applications and autonomous vehicles, where near-instantaneous response is necessary.

Does this mean that 5G is fully ready today? And does it mean 5G architecture is right for all applications? Read on to see how the new technology supports key applications, and which applications are more suited to 4G LTE.

5G Design and Planning Considerations

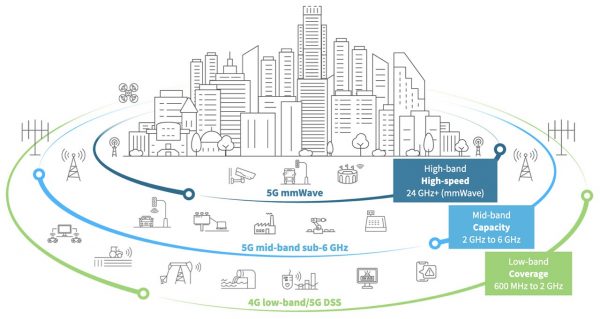

The design considerations for a 5G network architecture that supports highly demanding applications are complex. There is no one-size-fits-all approach: some applications require data to travel long distances, others require large data volumes, and some require a combination of both. So 5G architecture must support low-, mid- and high-band spectrum – from licensed, shared and private sources – to deliver the full 5G vision.

For this reason, 5G is architected to run on radio frequencies ranging from sub 1 GHz to extremely high frequencies, called “millimeter wave” (or mmWave). The lower the frequency, the farther the signal can travel. The higher the frequency, the more data it can carry.
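One way to get a feel for the range trade-off is the standard free-space path loss formula, sketched below in Python. This is only an idealized estimate: it ignores obstacles, antenna gains and atmospheric effects, and the 700 MHz and 28 GHz carriers and the 1 km distance are illustrative values chosen for this example, not figures from this article.

```python
import math

def free_space_path_loss_db(distance_km: float, frequency_mhz: float) -> float:
    """Free-space path loss in dB for a distance in km and a frequency in MHz."""
    if distance_km <= 0 or frequency_mhz <= 0:
        raise ValueError("distance and frequency must be positive")
    # Standard form of the formula for km and MHz units.
    return 20 * math.log10(distance_km) + 20 * math.log10(frequency_mhz) + 32.44

# Illustrative comparison at 1 km: a low-band carrier vs. a mmWave carrier.
low_band_loss = free_space_path_loss_db(1.0, 700.0)
mmwave_loss = free_space_path_loss_db(1.0, 28_000.0)
extra_loss_db = mmwave_loss - low_band_loss

print(f"700 MHz: {low_band_loss:.1f} dB, 28 GHz: {mmwave_loss:.1f} dB")
print(f"Extra loss at 28 GHz: {extra_loss_db:.1f} dB")
```

Under these assumptions, the 28 GHz signal loses roughly 32 dB more than the 700 MHz signal over the same distance, before any walls or foliage are considered.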

There are three frequency bands at the core of 5G networks:

- 5G high-band (mmWave) delivers the highest frequencies of 5G. These range from 24 GHz to approximately 100 GHz. Because high frequencies cannot easily move through obstacles, high-band 5G is short range by nature. Moreover, mmWave coverage is limited and requires more cellular infrastructure.

- 5G mid-band operates in the 2-6 GHz range and provides a capacity layer for urban and suburban areas. This frequency band has peak rates in the hundreds of Mbps.

- 5G low-band operates below 2 GHz and provides broad coverage. This band uses spectrum that is available and in use today for 4G LTE, essentially providing an LTE-based 5G architecture for 5G devices that are ready now. The performance of low-band 5G is therefore similar to that of 4G LTE, and it supports 5G devices on the market today.
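The three bands above can be expressed as a small lookup, shown here as a minimal Python sketch. The thresholds are taken directly from the ranges in this list. The function name `classify_5g_band` is hypothetical, and frequencies that fall between the listed ranges (for example, 6 to 24 GHz) are deliberately left unclassified rather than guessed.

```python
from typing import Optional

def classify_5g_band(frequency_ghz: float) -> Optional[str]:
    """Map a carrier frequency in GHz to the band names used in this article."""
    if frequency_ghz <= 0:
        raise ValueError("frequency must be positive")
    if frequency_ghz < 2.0:
        return "low-band"
    if frequency_ghz <= 6.0:
        return "mid-band"
    if 24.0 <= frequency_ghz <= 100.0:
        return "high-band (mmWave)"
    # Outside the ranges listed in the article: no band is assigned.
    return None

for ghz in (0.7, 3.5, 28.0, 10.0):
    print(ghz, "GHz ->", classify_5g_band(ghz))
```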

In addition to spectrum availability and application requirements for distance vs. bandwidth considerations, operators must consider the power requirements of 5G, as the typical 5G base station design demands over twice the amount of power of a 4G base station.

Considerations for Planning and Deploying 5G Applications

Systems integrators, and those developing and deploying 5G applications for the verticals we’ve discussed, will find that it is important to consider trade-offs. (Our video, 5 Factors to Guide Your Preparation for 5G, is a great resource.)

Here are some of the key considerations:

- Where will your application be deployed? Applications that are optimized for mmWave will not operate as expected within buildings or when extended range is required. Optimal use cases for mmWave frequencies include 5G cellular telecommunications in the 24- to 39-GHz bands, police radar in the Ka-band (33.4 to 36.0 GHz), scanners in airport security, short-range radar in military vehicles and automated weapons on naval ships to detect and take down missiles.

- What kind of throughput will be required? For autonomous vehicles and intelligent transportation systems (ITS) applications, the devices and connectivity must be optimized for speed. Near-real-time communications – measured in thousandths of a second – are critical for vehicles and devices to “make decisions” on turning, accelerating and braking, and the lowest possible latency is mission critical for these applications.

- Video and VR applications, by contrast, must be optimized for throughput. Video applications such as medical imaging can ultimately take full advantage of the massive amounts of data that 5G networks can support.

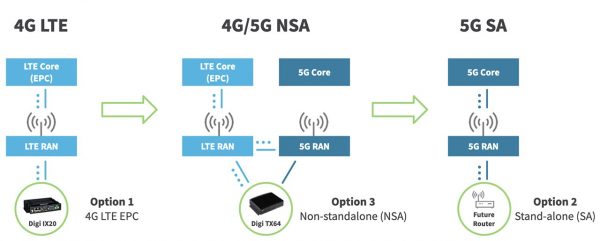

For 5G to deliver its full vision, the network infrastructure needs to evolve as well. The following diagram illustrates the migration over time, as well as Digi’s 5G product plans.

The earliest uses of 5G technology will not be exclusively 5G but will appear in applications where connectivity is shared with existing 4G LTE in what is called non-standalone (NSA) mode. When operating in this mode, a device will first connect to the 4G LTE network, and if 5G is available, the device will be able to use it for additional bandwidth. For example, a device connecting in 5G NSA mode could get 200 Mbps of downlink speed over 4G LTE and another 600 Mbps over 5G at the same time, for an aggregate speed of 800 Mbps.
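The NSA arithmetic in this example can be written as a short Python sketch. It is an idealized model that simply adds the two link rates; the function name `nsa_aggregate_mbps` is hypothetical, and actual aggregate throughput depends on radio conditions, the carrier and the device.

```python
def nsa_aggregate_mbps(lte_mbps: float, nr_mbps: float, nr_available: bool = True) -> float:
    """Idealized NSA downlink: the LTE anchor always contributes,
    and 5G adds bandwidth only when it is available."""
    if lte_mbps < 0 or nr_mbps < 0:
        raise ValueError("link rates must be non-negative")
    return lte_mbps + (nr_mbps if nr_available else 0.0)

# The figures from the example above: 200 Mbps over LTE plus 600 Mbps over 5G.
print(nsa_aggregate_mbps(200, 600))                      # 5G available
print(nsa_aggregate_mbps(200, 600, nr_available=False))  # LTE only
```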

As more and more 5G network infrastructure goes online over the next several years, it will evolve to enable 5G-only standalone (SA) mode. This will bring the low latency and the ability to connect massive numbers of IoT devices that are among the primary advantages of 5G.

Core Network

In this section we will provide a 5G core architecture overview and describe the 5G core components. We will also show how 5G architecture compares to the current 4G architecture.

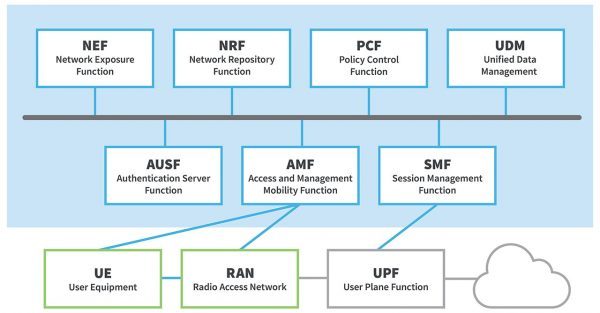

The 5G core network, which enables the advanced functionality of 5G networks, is one of three primary components of the 5G System, also known as 5GS (source). The other two components are the 5G Access Network (5G-AN) and User Equipment (UE). The 5G core uses a cloud-aligned service-based architecture (SBA) to support authentication, security, session management and aggregation of traffic from connected devices, all of which require the complex interconnection of network functions, as shown in the 5G core diagram.

The components of the 5G core architecture include:

- User Plane Function (UPF)

- Data Network (DN), e.g. operator services, Internet access or third-party services

- Access and Mobility Management Function (AMF)

- Authentication Server Function (AUSF)

- Session Management Function (SMF)

- Network Slice Selection Function (NSSF)

- Network Exposure Function (NEF)

- NF Repository Function (NRF)

- Policy Control Function (PCF)

- Unified Data Management (UDM)

- Application Function (AF)

The 5G network architecture diagram below illustrates how these components are associated.

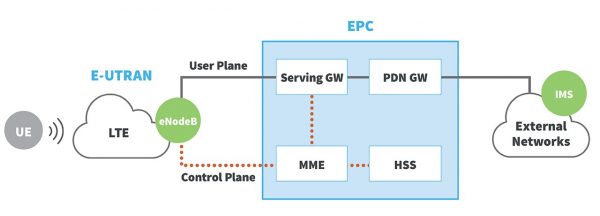

4G Architecture Diagram

When 4G evolved from its 3G predecessor, only small incremental changes were made to the network architecture. The following 4G network architecture diagram shows the key components of a 4G core network:

Source: 3GPP

In the 4G network architecture, User Equipment (UE), such as smartphones or cellular devices, connects over the LTE Radio Access Network (E-UTRAN) to the Evolved Packet Core (EPC) and then on to external networks, like the Internet. The Evolved NodeB (eNodeB) separates the user data traffic (user plane) from the network’s management data traffic (control plane) and feeds both separately into the EPC.

5G Architecture Diagram

5G was designed from the ground up, and network functions are split up by service. That is why this architecture is also called 5G core Service-Based Architecture (SBA). The following 5G network topology diagram shows the key components of a 5G core network:

Source: Techplayon

Here’s how it works:

- User Equipment (UE), such as 5G smartphones or 5G cellular devices, connects over the 5G New Radio (NR) access network to the 5G core and on to Data Networks (DN), like the Internet.

- The Access and Mobility Management Function (AMF) acts as a single entry point for the UE connection.

- Based on the service requested by the UE, the AMF selects the respective Session Management Function (SMF) for managing the user session.

- The User Plane Function (UPF) transports the IP data traffic (user plane) between the User Equipment (UE) and the external networks.

- The Authentication Server Function (AUSF) allows the AMF to authenticate the UE so that the UE can access the services of the 5G core.

- Other functions like the Session Management Function (SMF), the Policy Control Function (PCF), the Application Function (AF) and the Unified Data Management (UDM) function provide the policy control framework, applying policy decisions and accessing subscription information, to govern the network behavior.
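The sequence described above can be illustrated with a toy Python model. This is a heavily simplified sketch, not the 3GPP procedures: real authentication is a multi-step key-agreement exchange rather than a shared-secret comparison, and every name here (`SUBSCRIBERS`, `SMF_BY_SERVICE`, `amf_register` and the instance names) is hypothetical and exists only to show the order in which the functions are involved.

```python
from dataclasses import dataclass

# Hypothetical subscriber store standing in for the UDM.
SUBSCRIBERS = {"ue-001": {"secret": "k1", "services": {"internet", "iot"}}}

# Hypothetical mapping from a requested service to an SMF instance.
SMF_BY_SERVICE = {"internet": "smf-broadband", "iot": "smf-iot"}

@dataclass
class Session:
    ue_id: str
    service: str
    smf: str
    upf: str

def ausf_authenticate(ue_id: str, secret: str) -> bool:
    """Toy stand-in for the AUSF: check the UE against the subscriber store."""
    record = SUBSCRIBERS.get(ue_id)
    return record is not None and record["secret"] == secret

def amf_register(ue_id: str, secret: str, service: str) -> Session:
    """Toy stand-in for the AMF: single entry point for the UE."""
    if not ausf_authenticate(ue_id, secret):
        raise PermissionError("authentication failed")
    if service not in SUBSCRIBERS[ue_id]["services"]:
        raise PermissionError("service not in subscription")
    smf = SMF_BY_SERVICE[service]   # AMF selects the SMF for the requested service
    upf = f"upf-for-{smf}"          # the SMF would assign a UPF for the user plane
    return Session(ue_id, service, smf, upf)

session = amf_register("ue-001", "k1", "iot")
print(session)
```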

As you can see, the 5G network architecture is more complex behind the scenes, but this complexity is needed to provide better service that can be tailored to the broad range of 5G use cases.

Difference between 4G and 5G Network Architecture

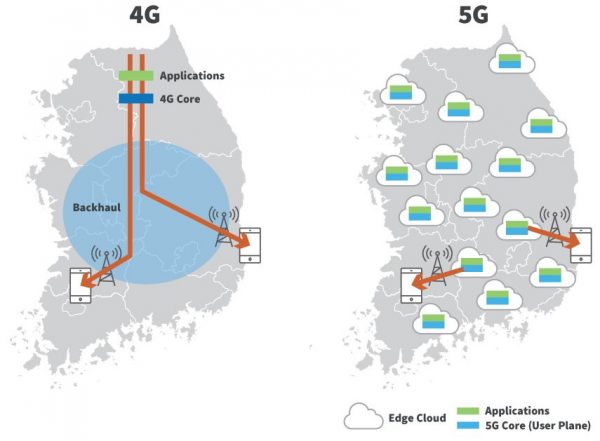

In this section, we’ll discuss how 4G and 5G architectures differ. In a 4G LTE network architecture, the LTE RAN and eNodeB are typically close together, often at the base of or near the cell tower, running on specialized hardware. The monolithic EPC, on the other hand, is often centralized and farther away from the eNodeB. This architecture makes high-speed, low-latency end-to-end communication challenging or even impossible.

As standards bodies like 3GPP and infrastructure vendors like Nokia and Ericsson architected the 5G core, they broke apart the monolithic EPC and implemented each function so that it can run independently of the others on common, off-the-shelf server hardware. This allows the 5G core to be decentralized and very flexible. For example, 5G core functions can now be co-located with applications in an edge datacenter, making communication paths short and thus improving end-to-end speed and latency.
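To see why shorter paths matter, the following Python sketch estimates round-trip propagation delay over optical fiber. It assumes a signal speed of roughly 200,000 km/s in fiber and counts propagation only, leaving out radio, queuing and processing delays. The 10 km and 1,000 km distances are illustrative assumptions, not figures from this article.

```python
# Approximate signal speed in optical fiber: about 200,000 km/s, i.e. 200 km per ms.
FIBER_KM_PER_MS = 200.0

def round_trip_propagation_ms(distance_km: float) -> float:
    """Round-trip propagation delay in milliseconds over a fiber path."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    return 2 * distance_km / FIBER_KM_PER_MS

edge_ms = round_trip_propagation_ms(10.0)       # core function in a nearby edge datacenter
central_ms = round_trip_propagation_ms(1000.0)  # core function in a distant central site

print(f"edge: {edge_ms:.2f} ms, central: {central_ms:.2f} ms")
```

With these assumed distances, the distant site adds about 10 ms of propagation delay alone, which is already more than a single-digit-millisecond latency budget allows.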

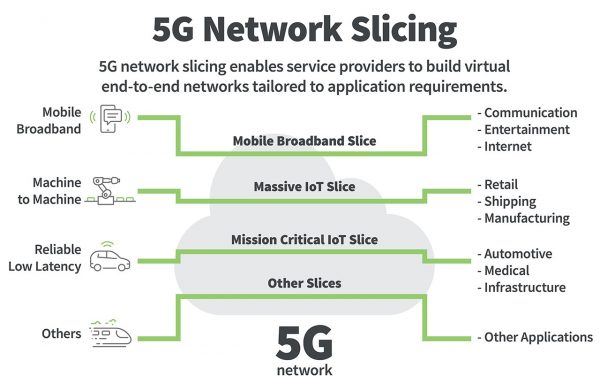

Another benefit of these smaller, more specialized 5G core components running on common hardware is that networks now can be customized through network slicing. Network slicing allows you to have multiple logical “slices” of functionality optimized for specific use-cases, all operating on a single physical core within the 5G network infrastructure.

A 5G network operator may offer one slice that is optimized for high bandwidth applications, another slice that’s more optimized for low latency, and a third that’s optimized for a massive number of IoT devices. Depending on this optimization, some of the 5G core functions may not be available at all. For example, if you are only servicing IoT devices, you would not need the voice function that is necessary for mobile phones. And because not every slice must have exactly the same capabilities, the available computing power is used more efficiently.
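The slicing idea can be sketched as a simple data model in Python. The three slices mirror the examples in the paragraph above, but the slice names, the `functions` sets and the `select_slice` helper are hypothetical; the only detail taken from the article is that a slice serving IoT devices would not need the voice function.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Slice:
    name: str
    optimized_for: str
    functions: frozenset  # core capabilities enabled in this slice (illustrative)

SLICES = [
    Slice("broadband", "high_bandwidth", frozenset({"data", "voice"})),
    Slice("low-latency", "low_latency", frozenset({"data"})),
    Slice("massive-iot", "massive_iot", frozenset({"data"})),  # no voice function
]

def select_slice(optimized_for: str, required_functions: set) -> Slice:
    """Return the first slice with the requested optimization and capabilities."""
    for candidate in SLICES:
        if candidate.optimized_for == optimized_for and required_functions <= candidate.functions:
            return candidate
    raise LookupError(f"no slice offers {sorted(required_functions)} for {optimized_for}")

print(select_slice("massive_iot", {"data"}).name)
print(select_slice("high_bandwidth", {"data", "voice"}).name)
```

Because each slice enables only the functions it needs, a request for voice on the IoT slice fails in this model instead of consuming resources that the slice was never given.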

Source: SDX Central

The Evolution of 5G

Every generation or “G” of wireless communication takes approximately a decade to mature. The switch from one generation to the next is mainly driven by the operators’ need to reuse or repurpose the limited amount of available spectrum. Each new generation has more spectral efficiency, which makes it possible to transmit data faster and more effectively over the network.

The first generation of wireless communication, or 1G, started back in the 1980s with analog technology. This was followed quickly by 2G, the first network generation to use digital technology. The growth of 1G and 2G was initially driven by the market for mobile phone handsets. 2G also offered data communication, but at very low speeds.

The next generation, 3G, began ramping up in the early 2000s. The growth of 3G was again driven by handsets, but 3G was the first technology to offer data speeds in the 1 megabit per second (Mbps) range, suitable for a variety of new applications both on smartphones and for the emerging Internet of Things (IoT) ecosystem. Our current generation of wireless technology, 4G LTE, began ramping up in 2010.

It’s important to note that 4G LTE (Long Term Evolution) has a long life ahead; it is a very successful and mature technology and is expected to be in wide use for at least another decade.

5G Architecture and the Cloud and the Edge

Let’s talk about edge computing within the 5G network architecture.

One more concept that distinguishes 5G network architecture from its 4G predecessor is that of edge computing, or mobile edge computing. In this scenario, you can have small data centers positioned at the edge of the network, close to the cell towers. That’s very important for very-low-latency applications and for high-bandwidth applications in which many users consume the same content.

For a high-bandwidth example, think of video streaming services. The content originates in a server that’s sitting somewhere in the cloud. If, let’s say, 100 people connected to the same cell tower are streaming a popular TV program, it’s more efficient to have that content as close to the consumer as possible, right there on the edge, ideally on the cell tower.

The user streams this content from storage media at the edge rather than having the content streamed and backhauled 100 times from a central location in the cloud. Instead, using the 5G structure, you can bring the content to the tower just once and then distribute it to your 100 subscribers.
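The backhaul saving in this example can be put into numbers with a minimal Python sketch. It assumes every subscriber watches the same program and that the edge cache holds the whole program; the 2 GB program size and the function name `backhaul_gb` are hypothetical.

```python
def backhaul_gb(subscribers: int, stream_gb: float, cached_at_edge: bool) -> float:
    """Data pulled over the backhaul link for one program, in GB."""
    if subscribers < 0 or stream_gb < 0:
        raise ValueError("subscribers and stream_gb must be non-negative")
    if subscribers == 0:
        return 0.0
    # With an edge cache the content crosses the backhaul once;
    # without it, each subscriber triggers a separate transfer.
    copies = 1 if cached_at_edge else subscribers
    return copies * stream_gb

without_edge = backhaul_gb(100, 2.0, cached_at_edge=False)
with_edge = backhaul_gb(100, 2.0, cached_at_edge=True)

print(f"without edge cache: {without_edge} GB, with edge cache: {with_edge} GB")
```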

The same principle applies in applications requiring two-way communication where low latency is needed. If a user has an application running at the edge, then the turnaround time is much faster because the data doesn’t have to traverse the network.

In the 5G network structure, these edge networks can also be used for services that are provided on the edge. Since it’s possible to virtualize these 5G core functions, you could have them running on a standard server or data center hardware and have fiber running to the radio that sends out the signal. So the radio is specialized, but everything else is pretty standard.

Today, 4G LTE is still growing. It provides excellent speed and sufficient bandwidth to support most current IoT applications. 4G LTE and 5G networks will co-exist over the next decade as applications begin to migrate, until 5G networks and applications eventually supersede 4G LTE.

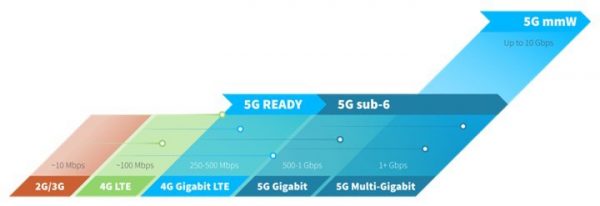

Devices Using 5G

5G will evolve over time, and 5G devices will follow suit. Early products will be “5G-ready”, which means that these products have the processing power and Gigabit Ethernet ports needed to support the higher bandwidth 5G modems and 5G extenders now on the horizon.

Later 5G products will have 5G modems directly integrated and have a faster multi-core processor, 2.5 or even 10 Gigabit Ethernet interfaces and Wi-Fi 6/6E radios. These product changes will drive the cost of 5G products up but are required to handle the additional speed and lower latency that 5G networks will offer.