Even if you are not consumed by wireless technology, it is hard to miss 5G. Naturally, it was the biggest topic at Mobile World Congress earlier this year, but it has also been covered by major television networks and in countless influential news outlets online and in print. 4G (LTE) also attracted a high level of media attention before it appeared. However, 5G is at least five years away. Why all the attention?

What Ever Happened to LTE-Advanced?

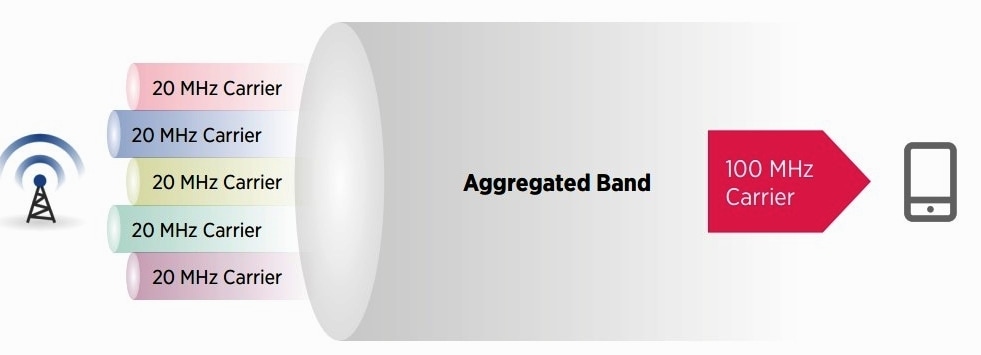

LTE-Advanced has been drowned out by the noise surrounding 5G, but it is nevertheless being deployed, with far less fanfare than is typical of this industry. There was a time when 3.5G bridged the gap between 3G and 4G, delivering the first passable mobile data rates. Think of LTE-Advanced as 4.5G, a half step between LTE and 5G that increases theoretical data rates and spectral efficiency, handles more concurrent user traffic, and delivers better performance at the edges of cell sites’ coverage areas, along with other features that help pave the road ahead. It also introduces carrier aggregation and relay nodes and extends Multiple-Input Multiple-Output (MIMO). Carrier aggregation (Figure 1) addresses the carriers’ insatiable appetite for bandwidth by combining carriers (channels) at nearby or even very different frequencies to produce greater bandwidth.
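To make the idea concrete, here is a minimal sketch of the bookkeeping behind carrier aggregation: the usable bandwidth is simply the sum of the component carriers' widths, wherever they sit in the spectrum. The limits used here (at most five component carriers, each one of the standard LTE channel widths) are assumptions based on LTE-Advanced Release 10, not figures from this article, and the function name is hypothetical.

```python
# Minimal sketch: carrier aggregation sums the widths of component carriers.
# Assumed limits (LTE-Advanced Release 10, not stated in the article):
# at most five component carriers, each using a standard LTE channel width.
LTE_CHANNEL_WIDTHS_MHZ = {1.4, 3, 5, 10, 15, 20}
MAX_COMPONENT_CARRIERS = 5


def aggregate_bandwidth_mhz(carriers):
    """Return the total bandwidth (MHz) of a list of (center_mhz, width_mhz) carriers.

    The carriers may sit next to each other or in very different bands;
    the center frequency does not affect the total, only the widths do.
    """
    if len(carriers) > MAX_COMPONENT_CARRIERS:
        raise ValueError(f"at most {MAX_COMPONENT_CARRIERS} component carriers allowed")
    total = 0.0
    for _center_mhz, width_mhz in carriers:
        if width_mhz not in LTE_CHANNEL_WIDTHS_MHZ:
            raise ValueError(f"unsupported channel width: {width_mhz} MHz")
        total += width_mhz
    return total


# Hypothetical example: 10 MHz at 700 MHz combined with 20 MHz at 1900 MHz.
print(aggregate_bandwidth_mhz([(700, 10), (1900, 20)]))  # 30.0
# Five 20 MHz carriers reach the assumed 100 MHz maximum.
print(aggregate_bandwidth_mhz([(f, 20) for f in (700, 850, 1900, 2100, 2600)]))  # 100.0
```

The point of the design is that the carriers do not have to be contiguous: a low-band carrier and a high-band carrier contribute to the total just as two neighboring channels would.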

MIMO, which you may already know about if you have an IEEE 802.11n or IEEE 802.11ac Wi-Fi router sprouting multiple antennas, increases data rates by transmitting and receiving two or more data streams over multiple antennas, a technique called spatial multiplexing. MIMO was already part of LTE but is enhanced in LTE-Advanced. Finally, LTE-Advanced makes better use of small base stations, called small cells, that fill in the coverage area. The first LTE-Advanced network was turned on by SK Telecom in South Korea in August 2015, and the technology is being deployed in the United States. Smartphones with LTE-Advanced include Apple’s latest-generation iPhones, most Samsung smartphones, and phones from LG, Microsoft/Nokia, Motorola (now Lenovo), Huawei, and BlackBerry.

Next, get used to hearing 5G called “IMT-2020,” the formal name given to it by the International Telecommunication Union (ITU), the UN agency that oversees and coordinates worldwide communications. However, most people still call it 5G.
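Why spatial multiplexing raises data rates can be sketched with an idealized capacity estimate. This is a simplification chosen for illustration, not something the article specifies: it assumes the channel supports min(n_tx, n_rx) independent streams that each see the same signal-to-noise ratio, so capacity grows linearly with the stream count. The channel width, SNR, and function name below are hypothetical.

```python
import math


def mimo_capacity_bps(bandwidth_hz, snr_db, n_tx, n_rx):
    """Idealized spatial-multiplexing capacity in bits per second.

    Simplifying assumption (not from the article): the channel supports
    min(n_tx, n_rx) independent streams and each stream sees the same SNR,
    so capacity grows linearly with the number of streams:
        C = streams * B * log2(1 + SNR)
    Real channels fall short of this because streams interfere and share power.
    """
    streams = min(n_tx, n_rx)
    snr_linear = 10 ** (snr_db / 10)
    return streams * bandwidth_hz * math.log2(1 + snr_linear)


# Hypothetical 20 MHz channel at 20 dB SNR.
single = mimo_capacity_bps(20e6, 20, 1, 1)
four_by_four = mimo_capacity_bps(20e6, 20, 4, 4)
print(f"1x1: {single / 1e6:.0f} Mb/s, 4x4: {four_by_four / 1e6:.0f} Mb/s")
print(f"gain: {four_by_four / single:.1f}x")  # gain: 4.0x
```

Note that the stream count is limited by the smaller antenna array: a 4x2 link behaves like 2x2 in this model, which is why both the base station and the handset need multiple antennas.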

To Infinity and… Stay Tuned

Now that the 5G global marketing machine is running at full tilt, we are going to hear about how it will be the advancement that connects everything that needs connecting. Whether this promise is realized remains to be seen. The full 5G dream, as it is pitched in the media today, is doubtful, since the laws of physics constrain what can ultimately be achieved. Moreover, much of what 5G will attempt relies heavily on pushing the envelope of science, which raises real questions about whether some goals can be met.

Nevertheless, 5G is not merely the next incremental increase in download and upload speeds but rather, as Intel puts it, “an end-to-end ecosystem that enables a fully mobile and connected society.” It will rely on both licensed and unlicensed spectrum, modularity, software-defined cloud-based networks, and dynamically allocated resources. This will require sweeping changes to the way wireless networks are constructed and orchestrated and to the frequencies at which they operate, along with dramatic reductions in latency (the round-trip time between the user and whatever he or she is communicating with) and an expansion of the types of devices connected to these networks.

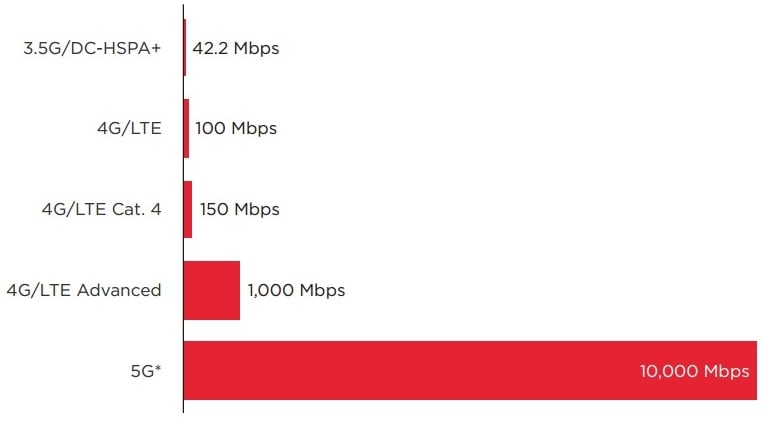

Other goals include peak theoretical data rates of 10 Gb/s (Figure 2), a 1000-fold improvement in bandwidth per unit area, a 10- to 100-fold increase in the number of simultaneously connected devices, a 90% reduction in annual network electricity use, and battery lives of up to 10 years for tiny IoT devices. And although theoretical data rates could be as high as 10 Gb/s, in practice they will be much lower. Even so, a data rate of “only” 500 Mb/s would be more than ten times what most users experience today and faster than most people have in their homes.
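A quick bit of arithmetic puts these rates in perspective. The sketch below computes idealized download times (file size divided by link rate, ignoring protocol overhead) for a hypothetical 5 GB file. The 50 Mb/s “typical today” rate is an assumption chosen to be one-tenth of the 500 Mb/s figure above, not a measured value.

```python
def download_seconds(size_gb, rate_mbps):
    """Idealized transfer time: file size divided by link rate, no protocol overhead."""
    size_bits = size_gb * 8e9       # decimal units: 1 GB = 8 x 10^9 bits
    rate_bps = rate_mbps * 1e6      # 1 Mb/s = 10^6 bits per second
    return size_bits / rate_bps


# Hypothetical 5 GB file. The 50 Mb/s "today" rate is an assumption chosen to be
# one-tenth of the 500 Mb/s figure in the text.
rates_mbps = {
    "5G theoretical peak": 10_000,
    "5G practical (500 Mb/s)": 500,
    "assumed typical today": 50,
}
for label, rate in rates_mbps.items():
    print(f"{label:>25}: {download_seconds(5, rate):6.0f} s")
```

Under these assumptions the same file takes 4 s at the theoretical peak, 80 s at 500 Mb/s, and 800 s at 50 Mb/s, which shows why even the “practical” 5G figure is a large step up.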

This tantalizing prospect means that 5G could compete with cable and fiber for entertainment and broadband delivery, as well as for several other applications, including purely cloud-based enterprise computing environments. 5G also encompasses the need to support communications for the billions of machine-to-machine (that is, IoT) devices that analysts claim will be in operation by 2020, which neatly coincides with when 5G may start to be deployed.
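The 10-year battery goal for IoT devices translates into a very small average current budget. The sketch below works through that arithmetic for a hypothetical device: the 2,400 mAh battery, 5 µA sleep current, 100 mA transmit current, and wake-up durations are all illustrative assumptions, and the model ignores self-discharge, temperature, and battery aging.

```python
HOURS_PER_YEAR = 24 * 365


def current_budget_ua(capacity_mah, target_years):
    """Highest average current (microamps) that still reaches the target life.

    Idealized: ignores self-discharge, temperature, and battery aging.
    """
    hours = target_years * HOURS_PER_YEAR
    return capacity_mah / hours * 1000  # mA -> uA


def duty_cycled_average_ua(sleep_ua, active_ma, active_s_per_hour):
    """Average current of a device that sleeps most of the time and wakes briefly."""
    active_fraction = active_s_per_hour / 3600
    return active_ma * 1000 * active_fraction + sleep_ua * (1 - active_fraction)


# Hypothetical device: 2,400 mAh battery, 5 uA asleep, 100 mA while transmitting.
budget = current_budget_ua(2400, 10)
for seconds_awake in (0.5, 1.0):
    avg = duty_cycled_average_ua(5, 100, seconds_awake)
    verdict = "meets" if avg <= budget else "misses"
    print(f"{seconds_awake} s/h awake: {avg:.1f} uA vs {budget:.1f} uA budget -> {verdict} 10 years")
```

With these assumed numbers, the budget is roughly 27 µA on average, so the difference between half a second and one second of radio activity per hour decides whether the goal is met. That sensitivity is why the network itself must let devices stay asleep as long as possible.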

Much of what is claimed to be exclusive to 5G can also be achieved with existing technology, which leaves two features that truly define 5G and are unique to it: extremely low latency and data rates greater than 1 Gb/s. Of the two, latency is by far the more difficult technological challenge, and if it cannot be reduced below 1 ms, some capabilities may simply be unachievable and will drop out of the standards.

Instant Response Times?

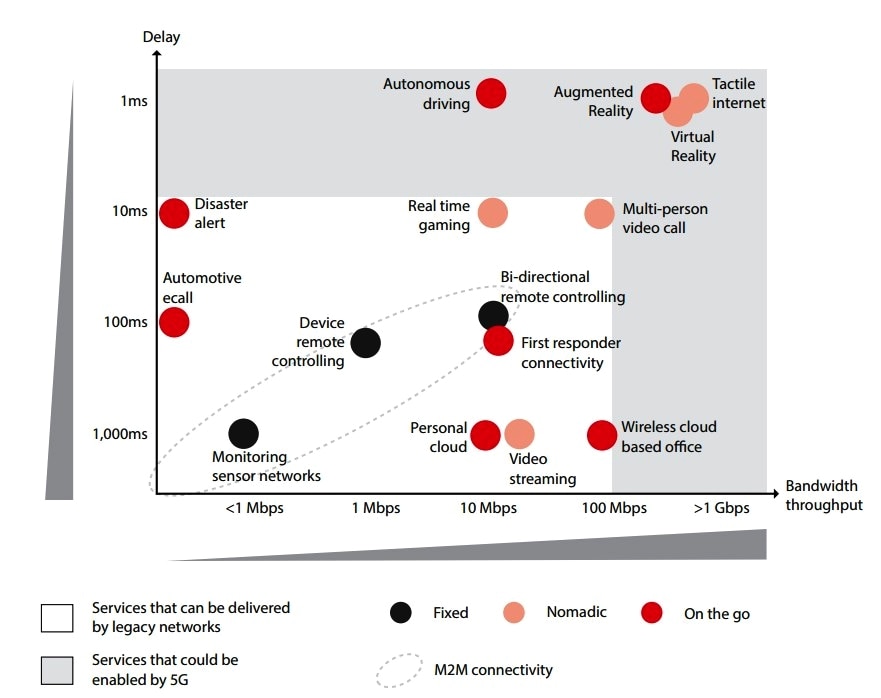

For most of us, latency is not all that important, as it does not interfere with the comparatively mundane things we all do, like browsing the web, watching videos, reading email, and “casually” playing games. “Serious” gamers are a different story. This is nicely illustrated in Figure 3, created by the GSMA, which plots data rates versus latency. LTE is limited to a latency of about 10 ms, and as you can see, all the applications in the white area fall within its (theoretical) capabilities. Emerging ones such as autonomous vehicles, virtual and augmented reality, and the Tactile Internet do not.

As for the Tactile Internet, don’t feel bad if you’ve never heard the term: it represents the “hairy edge” of achievable latency, 1 ms or less round trip, so for practical purposes it is simply not achievable now. Typical applications requiring the Tactile Internet include precise human-to-machine and machine-to-machine interaction in industry, robotics and telepresence, virtual reality, augmented reality, healthcare, traffic safety, and serious gaming.

Reducing round-trip latency to less than 1 ms is one of the most important tenets of 5G, as it is absolutely critical for applications in which virtually instantaneous response times are not just desirable but essential. For example, any application in which safety is paramount requires this capability. Vehicle autonomy is the most obvious, but the requirement also extends to medical applications, robotics, virtual and augmented reality, and some machine-to-machine interactions.

Latency requirements are dictated by human response capabilities; that is, networks must meet or exceed the speed at which people can respond. For example, when someone reacts to a sudden, unforeseen event, the lag between sensing it and responding to it is about 1 second. For web browsing to feel immediate, the page should appear after a click within a fraction of a person’s reaction time, that is, within a few hundred milliseconds. When we are prepared for an event, even faster response times are required: about 100 ms. Modern voice communications systems are designed to deliver voice within this timeframe because greater latency is annoying. A pleasant video experience requires matching our visual reaction time of about 10 ms, and modern televisions have a picture refresh rate of at least 100 Hz, which translates into a maximum latency of 10 ms.
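The tiers above can be lined up against LTE’s roughly 10 ms latency limit to see which uses it can serve. This sketch uses only the figures from the text, except that 300 ms is an assumed stand-in for “a few hundred milliseconds”; the dictionary and function names are hypothetical.

```python
# Latency tiers taken from the text; the 300 ms web figure is an assumed
# stand-in for "a few hundred milliseconds".
LATENCY_TIERS_MS = {
    "reacting to an unforeseen event": 1000,
    "web page appearing after a click": 300,
    "prepared reaction / voice": 100,
    "video at 100 Hz refresh": 1000 / 100,   # one frame period: 1 s / 100 = 10 ms
    "Tactile Internet": 1,
}

LTE_LATENCY_MS = 10  # approximate LTE latency limit cited earlier in the article


def lte_can_meet(requirement_ms, network_latency_ms=LTE_LATENCY_MS):
    """A network can serve an application if its latency does not exceed the requirement."""
    return network_latency_ms <= requirement_ms


for use, requirement in LATENCY_TIERS_MS.items():
    status = "within LTE's reach" if lte_can_meet(requirement) else "needs 5G-class latency"
    print(f"{use:>33}: {requirement:>6.0f} ms -> {status}")
```

Only the Tactile Internet tier falls below LTE’s floor, which mirrors the split between the white and shaded areas described for Figure 3.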

However, when we expect a fast response, as in serious gaming, where a player controls a visual scene and issues commands that anticipate a rapid response, or when we move our heads while wearing virtual reality goggles, the display must react within 1 ms. Achieving latency of less than 1 ms will be exceptionally difficult, regardless of how many advances are made between now and 2020 in signal processing and network design. Simply put, the laws of physics dictate how fast signals can travel through various media, not to mention any bottlenecks that occur within a network. Practically speaking, approaching the 1-ms benchmark may only be possible over very short distances between the source of the content and the end user.
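The physics constraint can be sketched as a distance budget: whatever part of the 1-ms round trip is not consumed by processing must cover the trip to the content source and back. This is an illustrative model only. The fiber refractive index of about 1.47 is an assumed typical value, and the processing times are hypothetical placeholders for everything other than signal travel (radio scheduling, coding, switching, server time).

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_REFRACTIVE_INDEX = 1.47  # approximate value for silica fiber (assumption)


def max_one_way_km(rtt_budget_ms, processing_ms=0.0, refractive_index=1.0):
    """Farthest distance to the content source if the remaining budget is pure propagation.

    processing_ms lumps together everything that is not signal travel time;
    the values used below are hypothetical.
    """
    propagation_ms = rtt_budget_ms - processing_ms
    if propagation_ms <= 0:
        return 0.0
    speed_km_per_ms = SPEED_OF_LIGHT_KM_PER_S / refractive_index / 1000
    return speed_km_per_ms * propagation_ms / 2  # halve: the signal must go and come back


for processing in (0.0, 0.5, 0.9, 0.98):
    air = max_one_way_km(1.0, processing)
    fiber = max_one_way_km(1.0, processing, FIBER_REFRACTIVE_INDEX)
    print(f"processing {processing:.2f} ms -> air {air:7.1f} km, fiber {fiber:7.1f} km")
```

With zero processing, light alone could reach about 150 km and back through air in 1 ms; every tenth of a millisecond spent elsewhere in the system shrinks that radius sharply, which is why the content source must sit very close to the user.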

According to some studies, this distance is less than one mile, which seems highly unlikely with current wireless infrastructure and would require massive numbers of small cells. One way to potentially mitigate this problem is to mandate a single network infrastructure used by every service provider, so that every user would access the same content source via a radio system. Of course, this would also require cooperation between competing carriers. If sub-1-ms latency cannot be achieved, what will become of vehicle autonomy, virtual reality, and all the other applications requiring instantaneous response times? Apart from cases with excellent transmission conditions and very short distances, this is a question no one can answer today. Keep in mind that wireless transmission is extremely fast: in the securities industry, where anything less than an instantaneous response means money lost, firms are broadly employing point-to-point microwave links to transfer data because signals travel faster through air than through optical fiber. So don’t give up hope yet.
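The microwave-versus-fiber point comes down to propagation speed: radio through air travels at nearly the speed of light, while light in glass fiber travels at roughly 1/1.47 of it. The sketch below compares propagation delay only, over a hypothetical 1,000 km route assumed to be equally long for both media; the refractive indices are approximate assumed values.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
AIR_REFRACTIVE_INDEX = 1.0003    # approximate, sea-level air (assumption)
FIBER_REFRACTIVE_INDEX = 1.47    # approximate, silica fiber core (assumption)


def one_way_delay_ms(distance_km, refractive_index):
    """Propagation delay only: distance divided by the signal speed in the medium."""
    speed_km_per_s = SPEED_OF_LIGHT_KM_PER_S / refractive_index
    return distance_km / speed_km_per_s * 1000


# Hypothetical 1,000 km route, assumed equally long for both media.
distance = 1000
microwave = one_way_delay_ms(distance, AIR_REFRACTIVE_INDEX)
fiber = one_way_delay_ms(distance, FIBER_REFRACTIVE_INDEX)
print(f"microwave: {microwave:.2f} ms, fiber: {fiber:.2f} ms, saved: {fiber - microwave:.2f} ms")
```

Under these assumptions the radio path saves about 1.6 ms one way, more than the entire 1-ms round-trip budget discussed above, which illustrates why the choice of medium matters so much for latency-critical traffic.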

The Quest for Bandwidth

Wireless carriers desperately need room to grow, and 5G provides it in spades. This is a major advancement, as there is precious little left in the spectrum between 700 MHz and about 2.6 GHz, where all networks currently operate. But the devil is in the details: while 5G opens up higher frequencies that have few other occupants and thus much greater room for expansion, signals at these frequencies propagate very differently from those at lower frequencies, which makes them far less suited to wide-area networks like cellular systems. In practical terms, operating at higher frequencies costs more: because signals travel shorter distances, more infrastructure is required, and the equipment is expensive to build. For these reasons, 5G systems will likely be deployed first at frequencies around 6 GHz. When the spectrum around 6 GHz becomes saturated, they will gradually move higher, eventually well into the millimeter-wave region, where achieving reliable communications over reasonable distances becomes an enormous challenge. At the very least, new transceivers, antennas, and other expensive hardware will be necessary, as will techniques such as massive MIMO and beamforming, to obtain high quality of service and “five nines” (99.999%) reliability under any operational scenario.
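One way to see why higher frequencies reach shorter distances is the free-space path loss (Friis) formula, which for isotropic antennas rises by 20 dB for every tenfold increase in frequency at a fixed distance. The sketch below compares 700 MHz, 2.6 GHz, and 6 GHz from the text with 28 GHz, a hypothetical millimeter-wave example chosen for illustration. It models free space only; obstructions and building penetration, which make higher frequencies fare even worse, are not included.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def fspl_db(distance_m, freq_hz):
    """Free-space path loss between isotropic antennas (Friis): 20*log10(4*pi*d*f/c)."""
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / SPEED_OF_LIGHT_M_PER_S)


# 700 MHz, 2.6 GHz, and 6 GHz come from the text; 28 GHz is a hypothetical
# millimeter-wave example chosen for illustration.
for freq_ghz in (0.7, 2.6, 6.0, 28.0):
    loss = fspl_db(1000, freq_ghz * 1e9)
    wavelength_cm = SPEED_OF_LIGHT_M_PER_S / (freq_ghz * 1e9) * 100
    print(f"{freq_ghz:5.1f} GHz: wavelength {wavelength_cm:5.1f} cm, FSPL at 1 km {loss:6.1f} dB")
```

At 1 km, moving from 700 MHz to 28 GHz adds about 32 dB of loss in this model. Massive MIMO and beamforming help recover part of that gap by concentrating energy toward the user rather than radiating it in all directions.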

Samsung has conducted studies of the challenges of communicating at millimeter-wave frequencies and released the results in a report to the Federal Communications Commission in the U.S. While Samsung engineers proved that these frequencies can indeed be used, the tests relied on line-of-sight transmission paths, and even then, obstructions and penetration into buildings were problematic. However, the goal of the tests was to establish proof of concept and to verify that these frequencies are usable. By the time they are needed, many of the hurdles may have been overcome.

New Network Architectures

One of the critical requirements of 5G is open network architectures that are not built on proprietary hardware. Proprietary hardware cannot scale to the necessary extent, is challenging and expensive to maintain, and resists the insertion of new technology. New network architectures will be implemented through techniques such as Network Function Virtualization (NFV) and Software-Defined Networking (SDN). NFV moves functionality formerly performed by dedicated local hardware into software running in the cloud, while SDN makes the network highly programmable by separating the control plane (which decides where traffic goes) from the data plane (which forwards it). SDN makes it possible to dynamically optimize how services are delivered, which many current network architectures cannot adequately achieve.
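The control-plane/data-plane split behind SDN can be illustrated with a toy model: switches only look up a flow table, while a central controller decides what goes into every table and can change it in software. This is a conceptual sketch, not OpenFlow or any real controller’s API; the `Switch` and `Controller` classes, switch names, destinations, and port numbers are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Switch:
    """Data plane: forwards purely by its flow table and makes no routing decisions."""
    name: str
    flow_table: dict = field(default_factory=dict)  # destination -> output port

    def forward(self, destination):
        # A real SDN switch would ask the controller about unknown traffic;
        # this toy version simply returns None.
        return self.flow_table.get(destination)


class Controller:
    """Control plane: holds network-wide policy and programs every switch centrally."""

    def __init__(self, switches):
        self.switches = {s.name: s for s in switches}

    def push_policy(self, policy):
        """policy maps switch name -> {destination: output port}; replaces each table."""
        for name, table in policy.items():
            self.switches[name].flow_table = dict(table)


# Hypothetical two-switch network; names and ports are illustrative only.
edge, core = Switch("edge"), Switch("core")
controller = Controller([edge, core])
controller.push_policy({"edge": {"video-cdn": 1}, "core": {"video-cdn": 3}})
print(edge.forward("video-cdn"))  # 1

# Congestion on port 1: the controller reroutes in software; no switch is reconfigured by hand.
controller.push_policy({"edge": {"video-cdn": 2}})
print(edge.forward("video-cdn"), core.forward("video-cdn"))  # 2 3
```

Because all decisions live in the controller, optimizing service delivery becomes a software change pushed from one place rather than a box-by-box reconfiguration.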

As in so many other industries, the key to success will be extensive use of the open source approach, which gives all developers and solution providers a unified way to implement their solutions much faster and at lower cost. This alone is a big departure from present-day practices. Considering that 5G is hoped to be operational early in the next decade, there is obviously an enormous amount of work to be done. However, the long-term benefits of this approach are huge, eliminating the “stovepipe” system approaches that, thus far, have been the norm in the wireless industry.

For more information, visit www.mouser.in