Martin Lass | Product Marketing Manager | Infineon Technologies

En route towards autonomous driving, it is imperative to gather all the information about what is happening both inside and around the car. Besides driver state monitoring, capturing 3D data inside a car enables entirely new human-machine interface (HMI) concepts.

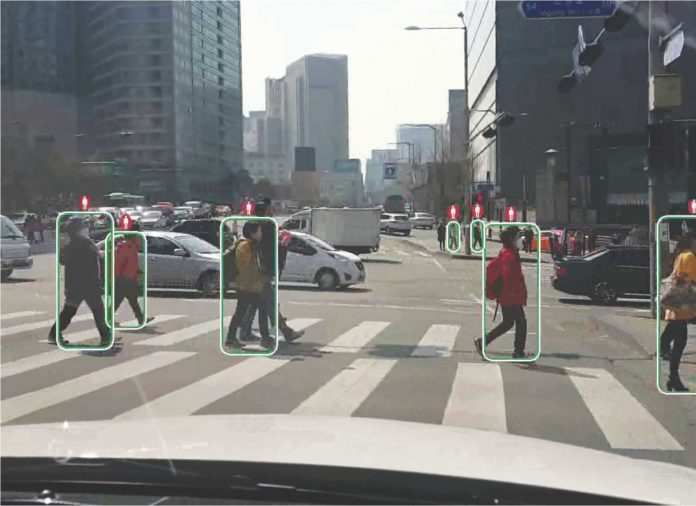

In advanced driver assistance systems, and on the road to self-driving cars, precise information about the driver's attentiveness and about the situation inside the vehicle is essential. Using a sophisticated 3D camera, the car captures the driver's behaviour and forwards this information to the advanced driver assistance system. If the driver closes their eyes or is not looking straight ahead, the system can trigger an alarm; if the driver fails to respond, it activates the emergency brake assist. The 3D camera chip delivers directly measured depth data. It does not first have to calculate depth from angle information, as a stereo camera must, which demands considerable computing power. Infineon's REAL3 image sensor makes it possible to build a 3D vision system in the smallest of installation spaces, for both indoor and outdoor applications.

Scalable portfolio

Infineon serves a wide range of applications with its 3D image sensors. In addition to the variants originally introduced for consumer applications, solutions for industrial and automotive electronics will also be offered in the future. For example, REAL3 image sensors are already used as bare dies in Google ATAP's Project Tango. The Tango project includes smartphones and tablets equipped with additional sensors and cameras that scan the environment in 3D to enable, for example, indoor navigation and augmented reality applications. For automotive applications, Infineon is working on versions with an extended temperature range, a robust package and AEC-Q100 qualification.

Various technologies have been developed for capturing 3D information. With stereo vision, for example, two standard 2D cameras record the scene from slightly different viewpoints, and the distance (depth) is calculated from the offset between the two images. This method has the advantage that low-cost standard image sensors can be used. However, it requires time-consuming mechanical adjustment and calibration. In addition, highly complex and computationally intensive algorithms are necessary. Finally, the method is limited in poor and changing lighting conditions.
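Once the offset (disparity) between the two images is known, the triangulation step itself is cheap; the computationally intensive part is finding the matching pixel for every point. The minimal sketch below assumes an ideal, rectified stereo pair under the pinhole model, where depth is Z = f·B/d. The focal length and baseline values are hypothetical and not taken from any real camera.

```python
def stereo_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair (pinhole camera model).

    disparity_px: horizontal offset of the same point between the two images
    focal_px:     focal length expressed in pixels
    baseline_m:   distance between the two camera centres in metres
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical rig: 500 px focal length, 10 cm baseline.
f, B = 500.0, 0.10
for d in (50.0, 25.0, 10.0):
    z = stereo_depth(d, f, B)
    # A one-pixel matching error matters more for distant objects (small disparity).
    dz = stereo_depth(d - 1.0, f, B) - z
    print(f"disparity {d:5.1f} px -> depth {z:.2f} m (+{dz:.3f} m for 1 px error)")
```

The loop also shows why calibration matters: a one-pixel error in disparity shifts the depth much more for distant objects, so the baseline and focal length must be known precisely.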

Another 3D technology uses structured light: a known pattern is projected onto the scene, and depth is calculated from how the pattern is deformed. Although this method offers advantages with regard to multipath interference, it requires a high-tech camera with special active illumination as well as precise and stable mechanical alignment between the lens and the pattern projector. The calibration effort is correspondingly high. In addition, the reflected patterns are sensitive to optical interference and surface textures.

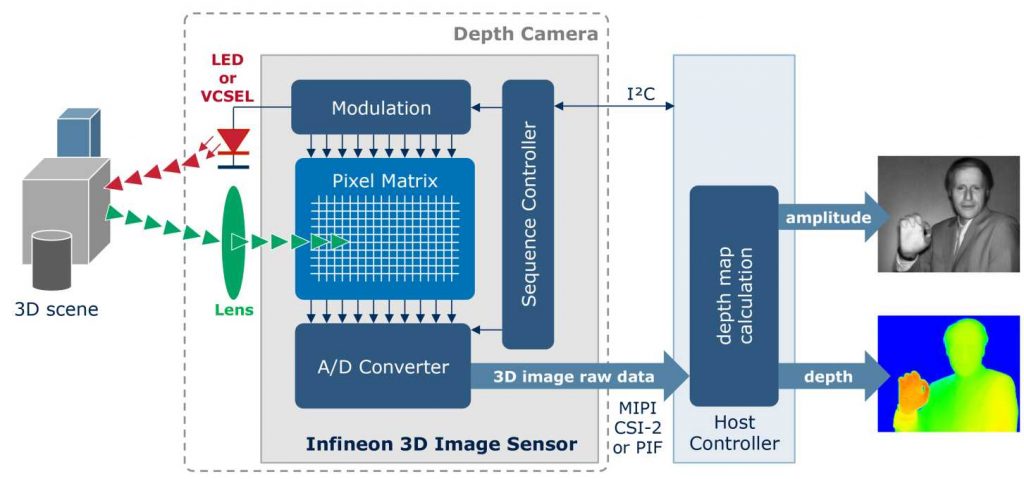

Measuring image depth and amplitude with every pixel

Image sensors based on time-of-flight (ToF) imaging, such as Infineon's REAL3 image sensor (Fig. 1), offer a compact, precise and versatile solution for 3D image capture. They measure the phase shift between a modulated illumination source and its reflection and convert it into distance information. In a ToF camera, the scene is actively illuminated with amplitude-modulated infrared light, and the image sensor measures the phase shift at every pixel; this shift results from the time the light takes to travel to the object and back. The camera therefore delivers the depth and amplitude of the imaged object for every pixel. Further advantages of the ToF method include the compact dimensions of the camera, operation in all lighting conditions and simple calibration.
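The phase-to-distance relationship can be made concrete with a textbook continuous-wave ToF calculation. A round trip of length 2d at modulation frequency f_mod produces a phase shift φ = 4π·f_mod·d/c, so d = c·φ/(4π·f_mod). The sketch below assumes a common four-phase scheme (samples at 0°, 90°, 180° and 270°), an ideal noise-free pixel and the sign convention stated in the comments; `simulate_samples` and `demodulate` are hypothetical helpers, and this is not a description of the REAL3's on-chip processing.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def simulate_samples(distance_m, f_mod_hz, amplitude=1.0, offset=2.0):
    """Hypothetical ideal pixel: correlation samples at 0, 90, 180 and 270 degrees.

    Convention assumed here: A_k = offset + amplitude * cos(phi - k * pi/2).
    """
    phi = 4 * math.pi * f_mod_hz * distance_m / C  # round-trip phase shift
    return [offset + amplitude * math.cos(phi - k * math.pi / 2) for k in range(4)]


def demodulate(a0, a1, a2, a3, f_mod_hz):
    """Return (distance_m, amplitude, offset) from four phase samples."""
    i = a0 - a2  # in-phase component: 2 * amplitude * cos(phi)
    q = a1 - a3  # quadrature component: 2 * amplitude * sin(phi)
    phi = math.atan2(q, i) % (2 * math.pi)  # wrap phase to [0, 2*pi)
    amplitude = math.hypot(i, q) / 2
    offset = (a0 + a1 + a2 + a3) / 4
    distance = C * phi / (4 * math.pi * f_mod_hz)
    return distance, amplitude, offset


f_mod = 30e6  # 30 MHz modulation
samples = simulate_samples(1.25, f_mod)
print(demodulate(*samples, f_mod))
```

Each pixel needs only a few subtractions, an `atan2` and a square root, which is why direct ToF measurement requires far less computation than stereo matching. Any constant offset appears equally in all four samples and cancels in the differences a0 − a2 and a1 − a3.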

ToF technology is highly scalable: cameras can be used over ranges from just a few centimetres up to 20 metres and beyond, provided that sufficiently powerful illumination is available. A depth resolution of around 1% of the measured distance is typical, lateral resolutions currently reach up to 352 × 288 pixels, and the cameras deliver up to 100 frames per second. A ToF camera generally consists of the following components: illumination unit, optical system, sensor, control electronics and evaluation stage (Fig. 2).
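To put these figures into numbers, the worked example below takes the typical 1% depth resolution and the maximum quoted resolution and frame rate at face value. The symbols σ_z (depth resolution) and N (points per second) are notation introduced here, and the results are upper figures rather than guarantees for every configuration.

```latex
\begin{aligned}
\sigma_z &\approx 0.01\,z
  &&\Rightarrow\quad \sigma_z(2\,\mathrm{m}) \approx 2\,\mathrm{cm},\quad
     \sigma_z(20\,\mathrm{m}) \approx 20\,\mathrm{cm} \\
N &= 352 \times 288 \times 100\,\mathrm{s^{-1}}
  &&= 10\,137\,600 \ \text{depth points per second}
\end{aligned}
```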

The illumination unit uses either LEDs or laser diodes, which can be modulated fast enough (LEDs, for example, up to 30 MHz) for the sensor to measure the time of flight accurately. The illumination unit usually transmits in the near-infrared range, which minimises any disturbance of the surroundings by the camera.
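The modulation frequency also sets how far a single-frequency ToF measurement can reach before the phase wraps around. Because phase repeats every 2π, distances are only unambiguous up to c/(2·f_mod), which is about 5 m at 30 MHz. The sketch below assumes an ideal single-frequency measurement; the helper names are hypothetical.

```python
C = 299_792_458.0  # speed of light in m/s


def unambiguous_range(f_mod_hz):
    """Maximum distance before the round-trip phase exceeds 2*pi."""
    return C / (2 * f_mod_hz)


def measured_distance(true_distance_m, f_mod_hz):
    """Distance an ideal single-frequency ToF pixel would report:
    the true distance modulo the unambiguous range (phase wrapping)."""
    return true_distance_m % unambiguous_range(f_mod_hz)


for f in (10e6, 30e6):
    print(f"{f / 1e6:.0f} MHz: unambiguous range {unambiguous_range(f):.2f} m, "
          f"a 6 m target reads as {measured_distance(6.0, f):.2f} m")
```

A lower modulation frequency extends the unambiguous range, while a higher one yields a larger phase change per centimetre of distance. The choice of frequency is therefore part of the application-specific camera design.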

An optical system (lens) collects the light reflected from the scene and images it onto the sensor. An optical band-pass filter passes only the wavelength at which the illumination unit operates. This eliminates a significant portion of the interfering background light.

The heart of the ToF camera is the 3D image sensor (Fig. 2), which measures the depth and amplitude values for each pixel. The illumination unit and the sensor must be controlled by sophisticated electronics to achieve the highest possible precision. The REAL3 image sensor already incorporates these electronics.

For high-performance image recognition, a camera system optimised for the specific application must be complemented by a suitable computing unit to handle the data and by software (middleware) to process the 3D data, for example for gesture recognition.

Although optical filters already suppress most of the background light, the pixels still have to cope with a very large dynamic range. Manufacturers have devised different strategies to largely suppress this background signal in their sensors.
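A simplified pixel model shows why background light matters even though it is only a constant offset. The sketch assumes a four-phase measurement and a hard clipping limit at the pixel's full-well capacity. The `full_well` value and the helper names are hypothetical. It illustrates the saturation problem that background suppression is meant to prevent, not any manufacturer's suppression circuit.

```python
import math


def pixel_samples(phi, amplitude, background, full_well):
    """Hypothetical pixel model: four phase samples riding on a background
    offset, clipped at the pixel's full-well capacity (saturation)."""
    return [min(background + amplitude * math.cos(phi - k * math.pi / 2), full_well)
            for k in range(4)]


def phase_and_amplitude(a0, a1, a2, a3):
    """Four-phase estimate; a constant offset cancels in the differences."""
    i, q = a0 - a2, a1 - a3
    return math.atan2(q, i) % (2 * math.pi), math.hypot(i, q) / 2


true_phi = 1.0  # radians
for background in (2.0, 9.5, 20.0):
    phi, amp = phase_and_amplitude(*pixel_samples(true_phi, 1.0, background, full_well=10.0))
    print(f"background {background:5.1f}: phase {phi:.3f} rad, amplitude {amp:.3f}")
```

With a background of 2.0 the phase is recovered exactly because the offset cancels. At 9.5, two samples clip and the phase (and hence the distance) is biased. At 20.0 every sample is saturated and the depth information is lost entirely.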

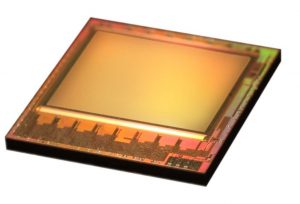

Highly integrated 3D ToF image sensors

Infineon has partnered with PMD Technologies of Siegen, Germany, to develop high-performance, highly integrated 3D ToF camera chips. PMD Technologies' main contribution to the chip family is the ToF pixel matrix with patented suppression of background illumination (SBI). SBI extends the dynamic range of the sensor chip and helps prevent saturation in strong ambient light, for example during outdoor operation in direct sunlight. In a suitably designed camera system, the sensor with SBI circuitry can operate in bright sunlight of 150 klx. The 3D image sensors combine the high-performance ToF pixel matrix with optimised, volume-proven CMOS manufacturing technology and system-on-chip (SoC) integration. This has allowed the photosensitive area to be integrated together with mixed-signal circuitry on a compact chip. The REAL3 image sensors offer what is currently the highest possible integration of functions: photosensitive pixel array, smart control logic, A/D converter, pixel matrix modulation, autonomous phase sequencing and digital output interfaces.

This integration brings numerous advantages. The optimised CMOS technology provides high sensitivity in the infrared range. The patented SBI circuitry in every pixel ensures reliable operation in all lighting conditions, whether in bright sunlight or darkness, inside or outside buildings and vehicles. Measuring depth and amplitude directly in every pixel reduces the computational effort. With a standard camera interface, the single-chip ToF sensor is easy to integrate. Thanks to the monocular camera architecture, there is no need for a mechanical baseline, the illumination unit can be positioned independently of the sensor, and no mechanical adjustment or angle correction is necessary. Nor is there any need for recalibration due to vibration or thermal influences.

The REAL3 image sensors read out data rapidly, within 1 ms to 4 ms, and support frame rates of 100 fps (frames per second) and higher. The image sensors can also be reconfigured dynamically via the I²C interface. This makes it easy to adjust the frame rate, the number of phase measurements and the exposure time during operation, so that performance can be optimised for the current lighting and operating conditions while power consumption is kept to a minimum. In addition, a smart power management system ensures low power consumption.
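The text does not describe how the exposure time should be chosen or which I²C registers hold it, so the following is only a hypothetical host-side policy. It shows how runtime reconfiguration might be used to adapt exposure time to the lighting. The function name, the full-well value, the target fraction and the exposure limits are all illustrative assumptions. The actual register write to the sensor is deliberately left out.

```python
def next_exposure_us(current_us, peak_sample, full_well, target_fraction=0.7,
                     min_us=50, max_us=2000):
    """Hypothetical auto-exposure step.

    Scales the exposure time so that the brightest pixel lands near
    target_fraction of full-well capacity, assuming the signal grows
    linearly with exposure. The result is clamped to [min_us, max_us].
    """
    if peak_sample <= 0:
        return max_us  # no signal at all: use the longest allowed exposure
    proposed = current_us * (target_fraction * full_well) / peak_sample
    return int(max(min_us, min(max_us, round(proposed))))


# Saturated scene (e.g. sunlight): shorten the exposure.
print(next_exposure_us(1000, peak_sample=4095, full_well=4095))
# Dark scene: lengthen the exposure, up to the configured limit.
print(next_exposure_us(1000, peak_sample=100, full_well=4095))
```

When the peak sample is already saturated, the true signal is unknown, so one step only shortens the exposure by the target fraction. Repeating the step over successive frames converges on a usable setting. Shorter exposures also reduce the illumination energy per frame, which matches the text's point about minimising power usage.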

Infineon's partner PMD Technologies has also devised a method for calibrating the image sensors quickly and reliably at the end of the manufacturing process. The one-off calibration takes less than 10 s per ToF camera, a key requirement for volume production.

3D image sensors for automotive applications

The REAL3 image sensors enable ultra-compact, high-precision monocular 3D camera systems. Such systems can be used for gesture recognition in computers and home electronics devices, as well as in industrial HMI and innovative automotive applications.

The use of 3D image sensors in cars improves safety and convenience for drivers. En route towards autonomous driving and even greater safety, it is imperative to acquire all the information about what is happening both inside and around the car. In addition to the driver state monitoring mentioned earlier, capturing 3D data inside the car enables completely new and less distracting HMI concepts. A 3D camera can, for example, determine the exact position of the driver's head. This information can be used to optimise the visibility of head-up displays, or to render augmented reality projections so that they always blend realistically and seamlessly into the real environment, regardless of head position. Touchless gesture control of infotainment, navigation and HVAC systems is also supported, as is passenger classification for presetting individual preferences such as seat position, mirror adjustment and airbag deployment force. Compact, high-performance 3D cameras are also key components for all-round vision in parking aids, for obstacle detection and, more generally, for other aspects of autonomous driving.

Important criteria for their use in cars include the REAL3 image sensors' high robustness to environmental influences, compact dimensions, low design complexity and high scalability, which can also be configured dynamically. Added to this is the lower computational load compared with other 3D technologies, so correspondingly less CPU power is required.

Products, reference designs and demonstrators

The REAL3 image sensor family currently comprises two members: the IRS1010C with a lateral resolution of 160 × 120 pixels (QQVGA) and the IRS1020C with 352 × 288 pixels (CIF). The number of pixels used can be freely selected via software. The chips qualified for the consumer electronics market are already shipping in initial volumes as bare dies. Besides further variants for consumer applications, an image sensor generation in an optical BGA package is currently being developed for the automotive market. The package is qualified according to AEC-Q100, measures 10 mm × 10 mm and is designed for an ambient temperature range of −40 °C to +105 °C.

In addition to the 3D sensor chips, the new CamBoard pico flexx (Fig. 3) is available as a reference design for 3D cameras. Developed by PMD Technologies, the camera has a resolution of 224 × 172 pixels, is powered via a USB 2.0 port and is based on the latest 3D image sensor chip from Infineon. Measuring just 68 mm × 17 mm × 7.25 mm, it is the smallest depth sensor camera available on the market. The CamBoard pico flexx uses an infrared laser as its illumination unit and can be used flexibly at various frame rates for a range of up to 4 m, with an average power consumption of 300 mW for the 3D image sensor chip and illumination unit combined.

Based on the REAL3 image sensor, Kostal has also developed a 3D camera for driver state monitoring (Fig. 4), available as production-quality prototypes with integrated image processing. The camera robustly captures the driver's facial contour via 49 reference points, even in widely varying lighting conditions. Combined with the 3D depth data, this allows the head position (x, y and z), head orientation (yaw, pitch and roll angles) and eyelid closure to be detected. The camera supports a resolution of 352 × 288 pixels (CIF), a frame rate of up to 50 fps and captures objects at distances of up to 1.5 m with a depth resolution of up to 1 cm.
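The text does not describe Kostal's processing pipeline. The sketch below only illustrates the general step of turning a 2D facial reference point plus its measured depth into an x, y, z position, using standard pinhole back-projection. The camera intrinsics (fx, fy, cx, cy) are hypothetical values for a 352 × 288 image. Taking the head position as the centroid of the landmarks is an assumed simplification. Estimating yaw, pitch and roll would additionally require fitting the landmarks to a face model, which is not shown.

```python
from statistics import fmean


def back_project(u, v, z_m, fx, fy, cx, cy):
    """Pinhole back-projection of pixel (u, v) with depth z_m to camera
    coordinates in metres. Assumes z_m is the distance along the optical
    axis; a radial ToF distance would have to be converted first."""
    x = (u - cx) * z_m / fx
    y = (v - cy) * z_m / fy
    return x, y, z_m


def head_position(landmarks, fx, fy, cx, cy):
    """Hypothetical head-position estimate: centroid of the back-projected
    landmarks. landmarks is a list of (u, v, z_m) tuples."""
    pts = [back_project(u, v, z, fx, fy, cx, cy) for u, v, z in landmarks]
    return tuple(fmean(p[i] for p in pts) for i in range(3))


# Hypothetical intrinsics for a 352 x 288 (CIF) image.
fx = fy = 300.0
cx, cy = 176.0, 144.0
print(back_project(236.0, 144.0, 0.75, fx, fy, cx, cy))
print(head_position([(170.0, 140.0, 0.72), (182.0, 148.0, 0.78)], fx, fy, cx, cy))
```

Because each landmark comes with a directly measured depth, the position follows from a few multiplications per point. No second image or correspondence search is needed.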

About the author: Martin Lass is Product Marketing Manager with Infineon Technologies AG in Neubiberg, near Munich, Germany. He is responsible for the 3D image sensor family REAL3.