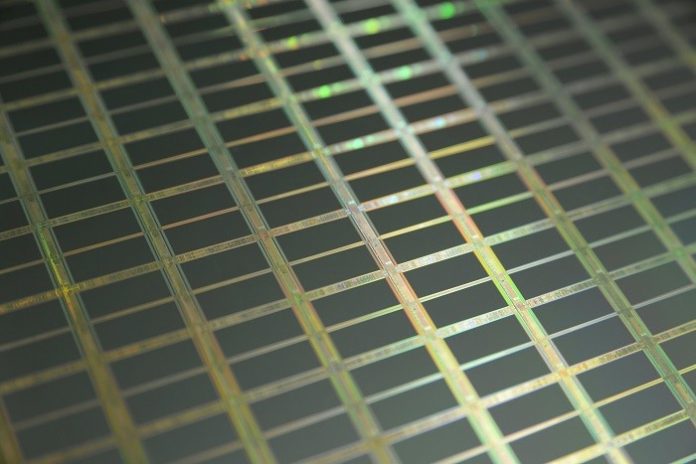

Researchers at The Rockefeller University have shed new light on Moore’s Law—perhaps the world’s most famous technological prediction—that chip density, or the number of components on an integrated circuit, would double every two years.

The study published by PLOS ONE reveals a more nuanced historical wave pattern to the rise of transistor density in the silicon chips that make computers and other high-tech devices ever faster and more powerful.

In fact, since 1959, there have been six waves of such improvements, each lasting about six years, during each of which transistor density per chip increased at least 10-fold, according to the paper, “Moore’s Law Revisited through Intel Chip Density.” The paper builds on an earlier study of DRAM chips as model organisms for the study of technological evolution.

The new work clarified the arcs of the wave pattern by adopting a novel perspective on chip density: it factored out the changing size of the chips in Fairchild Semiconductor International and Intel processors, starting in 1959.

After each six-year growth wave episode, about three years of negligible growth followed, according to authors Jesse Ausubel and David Burg of the Program for the Human Environment (PHE) at The Rockefeller University, New York.
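The wave figures above can be compared with the familiar two-year doubling rule through a short calculation. The sketch below is illustrative only: the helper name `doubling_time` is hypothetical, and it assumes smooth exponential growth within each interval, which the paper does not claim. Because the article says density rose "at least" 10-fold per wave, the results are upper bounds on the doubling time.

```python
import math

def doubling_time(growth_factor: float, years: float) -> float:
    """Years needed to double, given a `growth_factor`-fold rise over `years`.

    Assumes steady exponential growth across the interval (a simplification).
    """
    return years * math.log(2) / math.log(growth_factor)

# 10-fold rise during a six-year growth wave
within_wave = doubling_time(10, 6)

# The same 10-fold rise spread over the full nine-year cycle
# (six-year wave plus roughly three years of negligible growth)
full_cycle = doubling_time(10, 9)

print(f"within wave: {within_wave:.2f} years")  # 1.81
print(f"full cycle:  {full_cycle:.2f} years")   # 2.71
```

Under these assumptions, density doubles in under two years while a wave is underway, but closer to every 2.7 years once the plateaus are averaged in.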

The next growth spurt in transistor miniaturization and computing capability is now overdue, they say.

It will be pulled by demand from, for example, data-hungry artificial intelligence technologies such as facial recognition, 5G cellular networks and equipment, self-driving cars, and similar high-tech innovations requiring ever greater processing speed and computing capability.

A startup company, Cerebras, has touted the largest chip ever built, the Wafer-Scale Engine, which is 56 times the size of the largest graphics processing unit (GPU), the type of chip that has dominated computing platforms for AI and machine learning.

“The wafer-scale chip has 1.2 trillion transistors, embeds 400,000 AI-optimized cores (78 times more than the largest GPU), and has 3,000 times more on-chip memory.”

However, the end of the silicon chip era is in view, with only one or two silicon pulses left before further advances become exponentially more difficult due to physical realities and economic limitations, they say.

Continued growth of the computer industry will depend on such miniaturized innovations as nano-transistors, single-atom transistors, and quantum computing.

In 2019, the paper notes, Google parent company Alphabet claimed a breakthrough in quantum computing with “Sycamore,” a programmable processor built from superconducting qubits.

“The published benchmarking example reported that in about 200 seconds Sycamore completed a task that would take a current state-of-the-art supercomputer about 10,000 years.”

Says Mr. Ausubel, Director of the PHE: “We have climbed six times into higher valleys of silicon and similar substrates, but may be exiting the silicon valleys for landscapes of other materials and processes.”

“Qubit Gardens may await at the end of the present climb.”

The paper’s title refers to Gordon Moore’s famous 1965 observation that the number of transistors in microchips grows exponentially—doubling every 12–24 months (Moore’s Law).

However, the analysis of transistor density revealed a more complex pattern of serial waves of growth with each technological phase lasting about nine years in total before saturation and replacement with a new one.

Dr. Burg, also affiliated with Tel Hai College, Israel, says the new work reveals important subtleties within a technological phenomenon that has fuelled world progress for two generations.

The work drew on models, used previously in such research, that were developed to study growth in which complex feedback leads to limits on density, he adds, and it shows their power to illuminate the complex evolution of diverse machinery.
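To make the idea of serial, self-limiting growth waves concrete, here is a minimal sketch that adds up a series of logistic (S-shaped) pulses. It is not the authors' model: the article does not give the functional form, so the logistic shape, the choice to sum pulses in log10 units, and every name and default value below (`logistic`, `log10_density`, six waves from 1959, six-year rise, nine-year spacing, one 10-fold step per wave) are assumptions taken loosely from the figures quoted in the article.

```python
import math

def logistic(t: float, midpoint: float, duration: float, height: float) -> float:
    """S-shaped pulse that rises by `height`; `duration` is the time to go
    from 10% to 90% of that rise, centered on `midpoint`."""
    rate = math.log(81) / duration  # ln(81) spans the 10%-90% interval
    return height / (1.0 + math.exp(-rate * (t - midpoint)))

def log10_density(year: float, start: float = 1959, n_waves: int = 6,
                  wave_years: float = 6.0, cycle_years: float = 9.0,
                  decades_per_wave: float = 1.0) -> float:
    """Illustrative log10 of relative chip density: a sum of logistic waves.

    All defaults are assumptions for illustration, not fitted values.
    """
    total = 0.0
    for k in range(n_waves):
        # Each wave starts one cycle after the previous one
        midpoint = start + k * cycle_years + wave_years / 2
        total += logistic(year, midpoint, wave_years, decades_per_wave)
    return total

# Growth during the first assumed wave versus the plateau that follows it
wave_gain = log10_density(1965) - log10_density(1959)
plateau_gain = log10_density(1968) - log10_density(1965)
print(f"wave: {wave_gain:.2f} decades, plateau: {plateau_gain:.2f} decades")
```

In this toy version most of each 10-fold step happens inside the six-year wave, with much slower growth in the three years that follow, which is the staircase pattern the article describes. Because neighboring pulses overlap slightly, the plateaus here are slower rather than completely flat.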