In recent years, numerous roboticists worldwide have been trying to develop robotic systems that can artificially replicate the human sense of touch. In addition, they have been trying to create increasingly realistic and advanced bionic limbs and humanoid robots, using soft materials instead of rigid structures.

Despite the advantages of their soft texture, robotic hands made of soft materials are often unable to collect a wide range of sensory information. Replicating the complex biological mechanisms that allow humans to gather tactile information about objects has so far proved highly challenging.

Researchers at Beihang University in Beijing have recently developed a new tactile sensing technique that could be applied to robotic fingers made of soft materials. This mechanism, introduced in a paper pre-published on arXiv, is inspired by proprioception, the biological mechanism that allows mammals to sense their body’s position and movements.

“The idea behind our recent paper is based on the proprioception framework found in humans, which is what determines our body position and the load on our tendons/joints,” said Chang Cheng, one of the researchers who carried out the study. “Think about when you put a blindfold on and cover your ears, you can still feel your hand posture, arm position, or how heavy a grocery bag is; this ability is known as proprioception. We have been working on a prosthetic hand research project and we are looking for ways to address the lack of sensory feedback in existing prosthetic hands.”

In the past, robotics researchers did not typically associate proprioception with the sense of touch. Human proprioception is not particularly precise, which is probably why humans do not use it to recognize the texture of objects or surfaces.

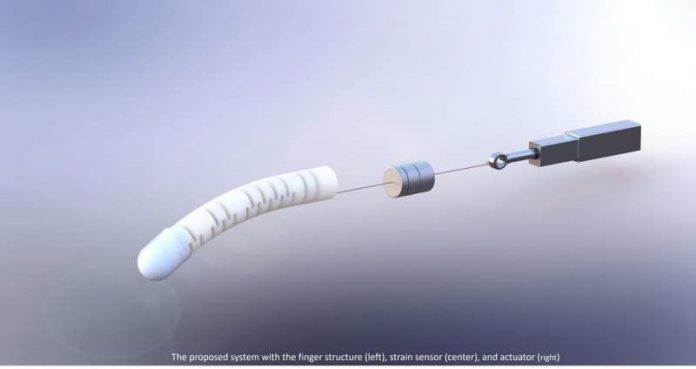

Because industrial sensors are far more sensitive than human proprioceptors, however, applying them to robotic fingers could help researchers gather more precise tactile feedback. The prototype system created by Cheng and his colleagues consists of a linear actuator, a tendon (or cable), a strain sensor and a soft robotic finger introduced in one of their previous papers.

“The tendon connects the finger to the actuator and the strain sensor is installed in the middle of the tendon,” Cheng said. “When the actuator is driven, it pulls the tendon, which causes the finger to bend/straighten, and the strain on the tendon changes accordingly. When the finger touches different objects, the sensor would output a series of strain signals that characterize the touched objects.”
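To see why a strain reading on the tendon says something about load, one can treat the tendon as a linear-elastic cable: Hooke's law then turns each strain value into a tension. The sketch below is only an illustration under that assumption; the circular cross-section, the Young's modulus and the example numbers are hypothetical and do not come from the study.

```python
import math


def tendon_tension(strain: float, youngs_modulus_pa: float, diameter_m: float) -> float:
    """Convert a tendon strain reading into tension (newtons).

    Assumes (hypothetically) a linear-elastic tendon with a uniform circular
    cross-section; real tendons and sensors may behave nonlinearly.
    """
    # Cross-sectional area of the assumed circular tendon.
    area_m2 = math.pi * (diameter_m / 2) ** 2
    # Hooke's law: stress = E * strain, and tension = stress * area.
    return youngs_modulus_pa * strain * area_m2


# Hypothetical example: a 1 mm tendon with E = 2 GPa reading 0.1% strain.
print(round(tendon_tension(0.001, 2e9, 1e-3), 3))
```

Under these assumptions, a heavier load or firmer contact shows up directly as higher strain, which is the same quantity the prototype's sensor records.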

Essentially, the technique devised by the researchers extracts features from the sensor’s readings. It then uses machine learning tools to infer the texture and rigidity of the surface or object that the robotic finger is touching.
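The article does not specify which features or machine learning models the team used, so the following is only a minimal sketch of the general pipeline: summarize each strain trace with a few features, then label a new trace by comparing it with labelled examples. The synthetic traces (a slow ramp as the finger bends plus a ripple whose amplitude stands in for surface roughness), the feature set, the nearest-centroid classifier and all function names are hypothetical assumptions, not the researchers' method.

```python
import math
import statistics
from typing import Dict, List, Sequence


def extract_features(signal: Sequence[float]) -> List[float]:
    """Summarize one strain trace as a small feature vector (hypothetical feature set)."""
    diffs = [abs(b - a) for a, b in zip(signal, signal[1:])]
    return [
        statistics.fmean(signal),   # average strain over the trace (related to load)
        statistics.pstdev(signal),  # overall variability of the trace
        max(signal) - min(signal),  # peak-to-peak range
        statistics.fmean(diffs),    # mean sample-to-sample change (roughness proxy)
    ]


def train_centroids(samples: Dict[str, List[Sequence[float]]]) -> Dict[str, List[float]]:
    """Average the feature vectors of each labelled class into one centroid."""
    centroids = {}
    for label, traces in samples.items():
        feats = [extract_features(t) for t in traces]
        centroids[label] = [statistics.fmean(col) for col in zip(*feats)]
    return centroids


def classify(signal: Sequence[float], centroids: Dict[str, List[float]]) -> str:
    """Return the label whose centroid is closest (Euclidean) to the trace's features."""
    feats = extract_features(signal)
    return min(centroids, key=lambda label: math.dist(feats, centroids[label]))


def synthetic_trace(ripple: float, phase: float, n: int = 200) -> List[float]:
    """Hypothetical strain trace: a bending ramp plus a texture-induced ripple."""
    return [0.5 * i / n + ripple * math.sin(0.8 * i + phase) for i in range(n)]


training = {
    "smooth": [synthetic_trace(0.01, p) for p in (0.0, 1.0, 2.0)],
    "rough": [synthetic_trace(0.08, p) for p in (0.0, 1.0, 2.0)],
}
centroids = train_centroids(training)
print(classify(synthetic_trace(0.07, 0.5), centroids))
```

A nearest-centroid rule is used here only because it fits in a few lines; with real sensor data the features would normally be scaled before comparison, and a more capable classifier would likely be needed.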

Cheng and his colleagues evaluated their tactile sensing technique in a series of tests using the prototype system they created. They found that it could identify texture and stiffness with accuracies of 100% and 99.7%, respectively.

“Most existing research about innervating bionic fingers proposed installing sensors on the fingertip surface,” Cheng said. “While these studies have yielded promising results, they require exact contacts between the fingertip sensors and the objects, which often cannot be ensured in practice. A key advantage of our study is that the sensing unit is on the tendon, thus contact from anywhere on the finger will result in a characterized signal output, which may be utilized to infer tactile information.”

The new tactile sensing method introduced by this team of researchers is based on embedding sensors in a robotic tendon, an approach that, according to the team, had not been tested before and that they found highly promising. In the future, their system could help in developing more advanced robots and prosthetic hands that gather tactile and proprioceptive feedback without requiring perfect or exact contact with a surface.

“We are now exploring the slippage detection capabilities of this system,” Cheng said. “When we humans manipulate or grasp things, slippage is almost unavoidable, therefore detection and control of slippage is crucial to robust and reliable controls. So, we believe slippage detection would be a nice feature to add, and our preliminary experiments showed really promising results.”

In addition to developing their system further, the researchers are collaborating with a renowned nanotechnology lab on a low-cost tactile sensor that can sense force/torque signals and could be placed on robotic fingertips. They have already created a few prototypes of this device and are now evaluating their performance.